Computing Geometric Measures of Melodic Similarity

Introduction

Choosing a Measure / Metric

Orthogonal Chains

Computing the Difference for Repeating Melodies

Overview of Algorithms for Minimizing Melodic Difference

With

respect to z

With

respect to z and Θ

Java Applet

Applications

Bibliography

The most instantly recognizable element in most pieces of Western music is melody, the sequence of pitches of specific durations that gives a perceptual contour to the music. We can thus say that two pieces of music are “similar” if their melodies are “similar.” In geometry and other branches of mathematics, we often have concrete measures of the similarity of two objects that are in the same class but not identical. For example, the relative similarity of two real numbers can be computed as their difference, or the square of their difference. The “similarity” of two functions over some period might be computed as the unsigned integral between them over this period.

But how to measure the difference between two melodies, artistic creations that are constrained in their construction only in that they can consist only of sound? If we can convert melodies into some geometric model, we can then use methods in Computational Geometry to arrive at a meaningful, quantifiable measure of their similarity or difference. The quality of this measure depends on both the geometric model and the measure used.

Meaningful Measures / Quantifiable Measures

One meaningful measure of the similarity between two melodies is statistical data from a polled audience who are played several pairs of melodies and asked to rate their relative degrees of similarity. It is this similarity that we hope to measure, but without the random elements, dependence on cultural background, and expense of polling. Whatever model for representation and measure of similarity we choose, we hope that it produces results roughly similar to these psychoacoustic tests. We also need our measure to give quantifiable results so that pairs of melodies can be said to be “more similar” or “less similar” than other pairs of melodies. Choosing a geometric model of melody and using a computer to operate on it will give such quantifiable results.

The Orthogonal Chain as a Model for Melody

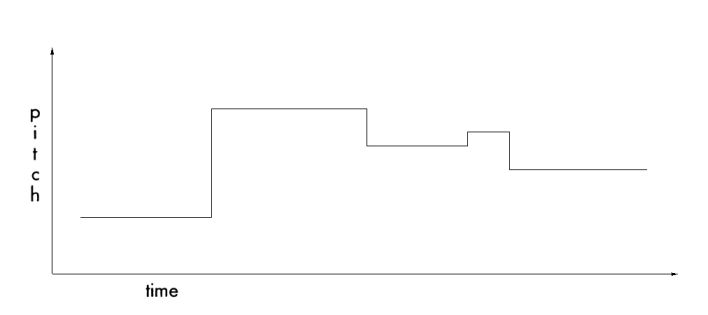

First proposed by Ó Maidin in 1998, the “area between orthogonal chains” method presents a geometric model of melodies and a difference measure between the two [4]. Melodies are converted into alternating chains of horizontal and vertical line segments that describe the melody as a pitch vs. time function. This representation is reminiscent of antique piano rolls, long strips of paper that have slits cut out to allow a pneumatic “reader” to convert the pattern into keystrokes.

Fig. 1 - A melody modeled with an orthogonal chain

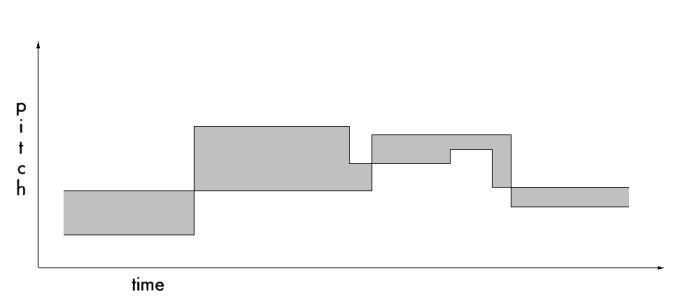

The difference between two such melodies can then be described as the magnitude of the unsigned area between two such orthogonal chains. The gray area in Figure 2 corresponds to this difference. It should be noted that this is not simply the integral function for the two broken lines, as there is no “negative area” here. Otherwise one melody would be able to compensate for spending too much time below the first by spending a period above the first, and perceptually this does not make two melodies more similar.

Fig. 2 - The difference between two melodies as the magnitude of the unsigned area

Computing this difference is trivial, but finding a minimal area, given the fact that the melodies can be shifted in the x (time) and y (pitch) directions and still be considered to have the same perceptual similarity, is more difficult. Two melodies that are identical except for the fact that one has been transposed (moved up in pitch) and/or moved over in time by some arbitrary constant should be found to have a difference of zero. Therefore the melodies must first be shifted in both axes until their area is minimized. Another problem is scaling: If two melodies are at slightly different tempi (speeds), they will need to be scaled in the x direction to find the minimum difference (for perceptual reasons, the same does not apply for pitch scaling).

Computing the Difference Between two Repeating Melodies

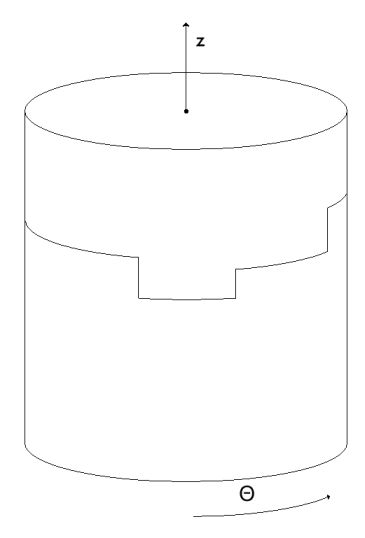

In the musical traditions of certain Eastern cultures such as India, Africa, and Southeast Asia, melodies are often cyclic. They are repeating chains that have no defined beginning or end. Unlike most Western music, a better model for these melodies is not a planar 2-dimensional surface but a cylindrical wrapping of such a surface. The pitch variable is then associated with the z-direction, which passes through the cylinder's central axis, and the time variable is replaced by the angle measure Θ.

Fig. 3 - A repeating orthogonal melody wrapped around a cylinder

Thus the problem of finding the difference between two repeating melodies of the same length is the same as finding a vertical shift Δz and an angular shift ΔΘ that minimize the area between two melodies Ma and Mb.

In 2003, Aloupis, Fevens, Langerman, Matsui, Mesa, Nuñez, Rappaport, and Toussaint [1] proposed two algorithms to solve this problem: one that allows the melodies to be shifted only in the z direction, and another that allows them to be shifted in both z and Θ directions.

The first algorithm keeps one melody, say Ma, fixed, while the other one, Mb, is swept vertically from a point below where it is disjoint from the first melody. The difference between the first horizontal segments of the melodies is called z. [1] includes the proof that the minimum sum of the areas in the quadrangles defined by the orthogonal chains must occur at a “z-event,” a positioning of the movable melody where two horizontal segments overlap. See the applet for a demonstration. To find the positioning of Mb that minimizes the unsigned area function, it is sufficient to find the set of z-events and their corresponding w's, where w is the width of the intersecting parts. The minimum is the weighted univariate median of the z's weighted by the w's. This mean can be computed in O (n) time. Once the optimal position for Mb has been chosen, the area can be computed in linear time.

Area Minimization along z and Θ

The addition of a second degree of freedom makes the problem of finding a positioning of Mb that minimizes the area function considerably more complex. The simplest approach is to observe that as the melodies are shifted in the Θ direction, they experience Θ-events that are roughly analogous to the z-events. When searching for the minimum, the only events that need be considered are the z-events and Θ-events, so the original algorithm can be applied O (n2) times for a total running time of O (n3).

In [1], the authors present an algorithm that finds this area in O (n2 log n) time. It places the z-events and their weights into a balanced binary search tree, and stores at each internal node five pieces of information: the number of leaves below that node that represent a growing quadrangle area, the sum of the weights over those leaves, the number of leaves below that node that represent a shrinking quadrangle area, the sum of the weights over those leaves, and the sum of the weights of the remaining leaves. The weighted median can then be computed by traversing the tree from root to leaf, which is done in O (log n) time.

Shifting Mb by some offset in Θ requires updating the tree only when a quadrangle is created or destroyed (when vertical edges collide). Using this method, the median and area difference can be computed in O (log n) time, therefore the running time of the algorithm shrinks from O (n3) to O (n2 log n).

This applet demonstrates minimization with respect to the z direction. The fixed melody in red is the opening two measures of the right hand of Mozart's Piano Sonata K 545, mvmt 1. The movable melody is the opening two bars of the first violin part for “Ase's Death” from the Peer Gynt suite by Edvard Grieg.

z-events occur at

z = {-7, -6, -5, -3, -1, 0}

If these events are weighted according to the total width of the incident horizontal segments, the following is a minimal distribution list:

zweighted = {-7, -7, -7, -7, -7, -7, -7, -7, -6, -6, -5, -5, -5, -5, -5, -3, -3, -3, -3, -3, -1, -1, -1, -1, 0, 0, 0, 0, 0, 0, 0, 0}

Note that the median of this list, which is the weighted univariate median of all the z's with weighting done by the respective w's, is

median ( zweighted ) = -3

In accordance with the proof in [1], it is at this z-value that we observe the minimum sum of the areas:

min ( A(z) ) = 20

For the purposes of this applet, the units of time are quarter notes (the Grieg has been time-scaled so that 2 measures of “largo” correspond to 2 measures of “allegro”). The units of pitch are semitones, and the orthogonal chains used above are perfect replicas of the original scores. The units of area are therefore “semitone-beats” where a horizontal segment differs from another by one semitone-beat if it is a semitone above or below the other and both segments are one beat long.

The advent of sampling in popular music has raised questions of authenticity and found many artists arguing highly subjective cases over how similar or different a new work is from an old one. If members of the music industry can be made to agree on a standard measure of similarity, these disputes can be resolved much more easily.

Musicological research can also be aided by such a measure; analysis of works (repeating motives), quantification of the relative influence of a composer, and evaluation of the prevalence of certain melodic fragments in the written music of certain time periods can all be made easier with such a computational tool that produces hard, numerical evidence.

Perhaps the most exciting possible use of these measures is Query-by-Humming, or QBH [2]. At present, a person searching for a piece of music in the vast catalogue of existing music (both recordings and scores) must supply some concrete information (composer, date, record label, year, etc.) that is used to scan the header information of some database. A QBH system allows them to hum a single melodic line into a microphone (and optionally enter other concrete information to help narrow the search), and will then return a list of search results, pieces of music that contain a melody that is relatively similar to the entered query. This system has yet to be implemented successfully because, among other reasons, it requires a complete library of searchable music in some format where the individual melody lines are separated (MIDI, for example) and conversion into such a format is time-consuming and error-prone. It is also difficult to account for slight errors in pitch and note duration in the hummed sample, especially considering that a working system should not assume the user to be a trained musician.

[1] Aloupis, G., Fevens, T., Langerman, S., Matsui, T., Mesa, A., Nuñez, Y., Rappaport, D., and Toussaint, G. Computing a geometric measure of the similarity between two melodies. In Proc. 15th Canadian Conference on Computational Geometry, Dalhousie University, Halifax, August 11-13, 2003, pp. 81-84.

[2] Cristian Francu and Craig G. Nevill-Manning. Distance metrics and indexing strategies for a digital library of popular music. In Proc. IEEE Int'l Conference on Multimedia and EXPO (II), 2000.

[3] L. Hoffman-Engl. Melodic similarity – a conceptual framework. In Proc. 2nd Int'l Conference on Understanding and Creating Music, Naples, 2002.

[4] D. Ó Maidín. A geometrical algorithm for melodic difference. Computing in Musicology, 11:65-72, 1998.

Page last updated 2004-04-30 at 23:00 EDT by Ian Ratzer